We are in the process of curating a list of this year’s publications, including links to social media, lab websites, and supplemental material. Currently, the list includes 86 full papers, 18 posters, one journal paper, one interactive demo, and two student mentoring programs, and we lead five workshops. Two papers received a best paper award and nine papers received an honorable mention.

Is your 2026 publication missing? Please enter the details in this Google Form and send us an email to let us know that you added a publication: contact@germanhci.de

Disclaimer: This list is not complete yet; the DOIs might not be working yet.

“It’s Just a Wild, Wild West”: Harnessing Public Procurement as an AI Governance Mechanism

Anna-Ida Hiding (University of Cambridge), Emma Kallina (RC-Trust, UA Ruhr + University Duisburg-Essen), Jat Singh (RC-Trust, UA Ruhr + University Duisburg-Essen)

Honorable Mention | Abstract | Tags: Honorable Mention, Papers | Links:

@inproceedings{Hiding2026ItsJust,

title = {“It’s Just a Wild, Wild West”: Harnessing Public Procurement as an AI Governance Mechanism},

author = {Anna-Ida Hiding (University of Cambridge), Emma Kallina (RC-Trust, UA Ruhr + University Duisburg-Essen), Jat Singh (RC-Trust, UA Ruhr + University Duisburg-Essen)},

url = {https://www.linkedin.com/in/emma-kallina/, author's linkedin},

doi = {10.1145/3772318.3791968},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Public sector AI has the potential to harm citizens, with risks increasing as its use expands. Recent work positions public procurement as a way to shape public sector AI in line with public interests, using the state’s purchasing power to influence which AI systems are procured and under what conditions. This paper examines how this potential can be realised in practice by drawing on semi-structured interviews with UK and EU buyers, providers, and procurement experts. Our findings result in six promising procurement practices that enable the public sector to shape AI in line with public interests, alongside concrete mechanisms to support their uptake. Further, we find that AI-specific procurement approaches remain immature and systems often enter through informal channels with less scrutiny. We provide directions for both research and practice on how public procurement can be used as a governance mechanism for better aligning AI with public interests.},

keywords = {Honorable Mention, Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

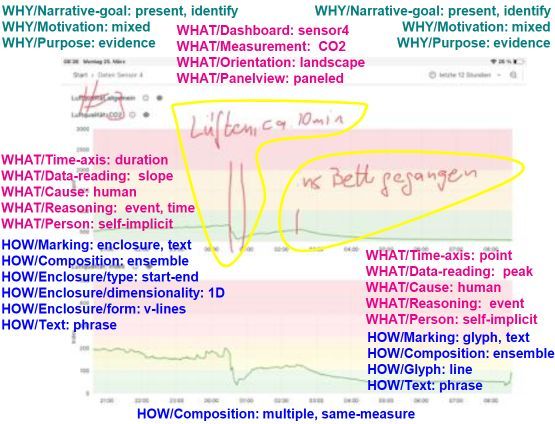

(De)Coding Lay Users' Annotations of Time-series Sensor Data from the Home

Albrecht Kurze (TU Chemnitz), Alexa Becker (HS Anhalt), Jana Bergk (TU Chemnitz), Christin Reuter (TU Chemnitz)

Abstract | Tags: Posters | Links:

@inproceedings{Kurze2026DecodingLay,

title = {(De)Coding Lay Users' Annotations of Time-series Sensor Data from the Home},

author = {Albrecht Kurze (TU Chemnitz), Alexa Becker (HS Anhalt), Jana Bergk (TU Chemnitz), Christin Reuter (TU Chemnitz)},

url = {https://www.tu-chemnitz.de/informatik/mi/, website

https://wisskomm.social/@tucmi, lab's social media

https://hci.social/@AlbrechtKurze, author's hci.social},

doi = {10.1145/3772363.3798962},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Annotation through additional graphic and textual elements is a helpful means of enriching visualizations. Previous work analyzing such annotations is primarily focused on infographics created by experts and tends to be generic for many different types of data and visualizations. In this work, we focus on the analysis of annotations of time-series data of simple sensors (e.g., light, temperature) from the smart home created by lay users. We take into account specifics of this context (type of data, home environment, limited expertise) and present the current status of our taxonomy that allows analyzing the WHY, WHAT, and HOW of such annotations. Our taxonomy is intended to analyze a large number of annotations created in smart home field studies. In the long term, this will inform implications for design to support lay users' data interaction with smart home data in sensemaking as well as annotation and data management workflows.},

keywords = {Posters},

pubstate = {published},

tppubtype = {inproceedings}

}

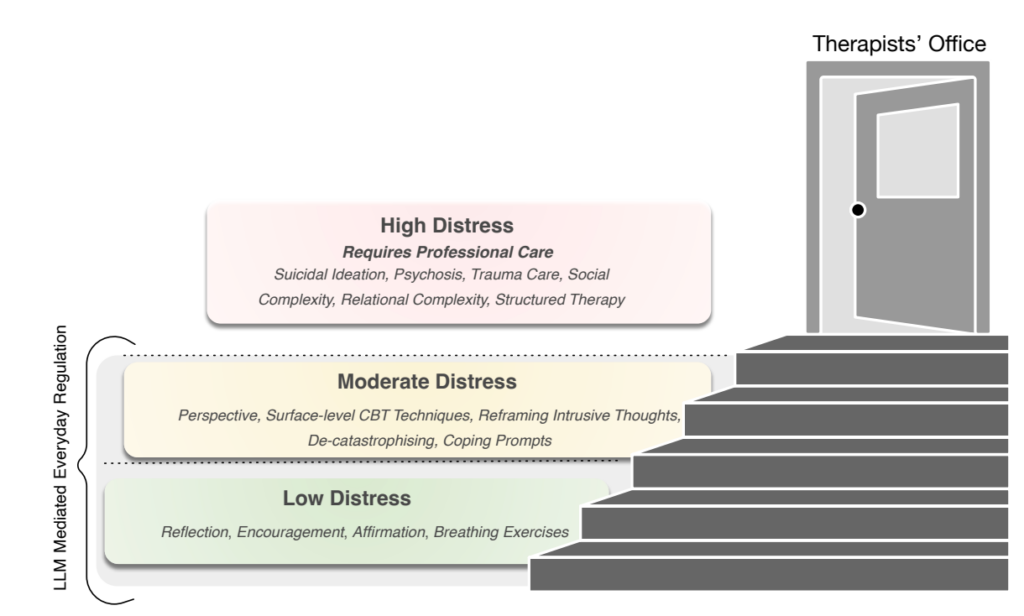

A Conditional Companion: Lived Experiences of People with Mental Health Disorders Using LLMs

Aditya Kumar Purohit (Center for Advanced Internet Studies (CAIS)), Hendrik Heuer (Center for Advanced Internet Studies (CAIS))

Abstract | Tags: Papers | Links:

@inproceedings{Purohit2026ConditionalCompanion,

title = {A Conditional Companion: Lived Experiences of People with Mental Health Disorders Using LLMs},

author = {Aditya Kumar Purohit (Center for Advanced Internet Studies (CAIS)), Hendrik Heuer (Center for Advanced Internet Studies (CAIS))},

url = {https://www.linkedin.com/in/adityakumarpurohit/, author's linkedin},

doi = {10.1145/3772318.3791763},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Large Language Models (LLMs) are increasingly used for mental health support, yet little is known about how people with mental health challenges engage with them, how they evaluate their usefulness, and what design opportunities they envision. We conducted 20 semi-structured interviews with people in the UK who live with mental health conditions and have used LLMs for mental health support. Through reflexive thematic analysis, we found that participants engaged with LLMs in conditional and situational ways: for immediacy, the desire for non-judgement, self-paced disclosure, cognitive reframing, and relational engagement. Simultaneously, participants articulated clear boundaries informed by prior therapeutic experience: LLMs were effective for mild-to-moderate distress but inadequate for crises, trauma, and complex social-emotional situations. We contribute empirical insights into the lived use of LLMs for mental health, highlight boundary-setting as central to their safe role, and propose design and governance directions for embedding them responsibly within care ecosystems.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

A Tree’s Perspective: Enhancing Nature Connectedness Through Transitional and Multisensory Virtual Reality Experiences

Lisa L. Townsend (TU Dortmund University), Julian Rasch (LMU Munich), Amy Grech (University of Strathclyde), Bernhard E. Riecke (Simon Fraser University), Sven Mayer (TU Dortmund University, Research Center Trustworthy Data Science and Security)

Honorable Mention | Abstract | Tags: Honorable Mention, Papers | Links:

@inproceedings{Townsend2026TreesPerspective,

title = {A Tree’s Perspective: Enhancing Nature Connectedness Through Transitional and Multisensory Virtual Reality Experiences},

author = {Lisa L. Townsend (TU Dortmund University), Julian Rasch (LMU Munich), Amy Grech (University of Strathclyde), Bernhard E. Riecke (Simon Fraser University), Sven Mayer (TU Dortmund University, Research Center Trustworthy Data Science and Security)},

url = {https://haii.cs.tu-dortmund.de/, website

https://haii.group/, lab's social media

https://www.linkedin.com/in/lisa-townsend-hci/, author's linkedin},

doi = {10.1145/3772318.3790282},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Embodying natural entities in Virtual Reality (VR) shows potential to enhance nature connectedness, but design factors that support such embodiment remain underexplored. This study examined whether transitional elements in the physical setting before and after VR and multisensory stimuli during VR can strengthen nature connectedness in a transformative tree-embodiment experience. Through a mixed-methods approach (N=20), where we varied the pre- and post-VR experience (Neutral vs. Transitional) and sensory modalities (Audiovisual vs. Multisensory), we found that both transitional and multisensory experiences significantly enhanced presence, embodiment, and nature connectedness, with increases in emotional connectedness sustained one week later. Drawing on interview insights and impact ratings of specific design features, we derive design recommendations for integrating transitional and multisensory elements. Our findings demonstrate the value of holistic design for enhancing the emotional and transformative potential of virtual nature embodiment for fostering environmental awareness.},

keywords = {Honorable Mention, Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Advancing Co-located and Distributed Multi-user Mixed Reality

Katja Krug (Interactive Media Lab Dresden, TUD Dresden University of Technology)

Abstract | Tags: Student Mentoring Program (SMP) | Links:

@inproceedings{Krug2026AdvancingColocated,

title = {Advancing Co-located and Distributed Multi-user Mixed Reality},

author = {Katja Krug (Interactive Media Lab Dresden, TUD Dresden University of Technology)},

url = {https://imld.de/, website

https://www.linkedin.com/company/iml-dresden/, lab's linkedin

www.linkedin.com/in/katja-krug, author's social media},

doi = {10.1145/3772363.3799196},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Mixed Reality (MR) enables new forms of social interaction by blending physical and virtual spaces across co-located, distributed, and hybrid settings. In these environments, interpersonal encounters are shaped not only by technical constraints but also by interaction design, perception, and social dynamics. In my research, I investigate key questions across these dimensions and contribute to a deeper understanding of multi-user MR system development and of how people perceive, interact, and engage with one another in shared MR spaces. To this end, we conducted a structured review of design and research challenges in multi-user MR systems, explored interaction strategies that support collaborative activity while preserving social and contextual awareness, and examined how avatar self-views influence communication and attention in multi-user scenarios. My next steps include exploring how MR visualizations can support and mediate social dynamics and collaborative flow in group meeting contexts.},

keywords = {Student Mentoring Program (SMP)},

pubstate = {published},

tppubtype = {inproceedings}

}

AI CHAOS! 2nd Workshop on the Challenges for Human Oversight of AI Systems

Malik Khadar (Department of Computer Science & Engineering, University of Minnesota), Julia Cecil (Department of Psychology, LMU Munich), Leon Van Der Neut (Delft University of Technology), Nikola Banovic (Electrical Engineering and Computer Science, University of Michigan), Dr. Kevin Baum (Center for European Research in Trusted AI (CERTAIN), German Research Center for Artificial Intelligence (DFKI), Saarbrücken), Stevie Chancellor (Computer Science and Engineering, University of Minnesota), Enrico Costanza (UCL Interaction Centre, University College London), Motahhare Eslami (School of Computer Science, Carnegie Mellon University), Anna Maria Feit (Saarland Informatics Campus, Saarland University), Susanne Gaube (Global Business School for Health (GBSH), University College London (UCL)), Ujwal Gadiraju (Web Information Systems, Delft University of Technology), Harmanpreet Kaur (University of Minnesota)

Abstract | Tags: Workshops | Links:

@inproceedings{Khadar2026AiChaos,

title = {AI CHAOS! 2nd Workshop on the Challenges for Human Oversight of AI Systems},

author = {Malik Khadar (Department of Computer Science & Engineering, University of Minnesota), Julia Cecil (Department of Psychology, LMU Munich), Leon Van Der Neut (Delft University of Technology), Nikola Banovic (Electrical Engineering and Computer Science, University of Michigan), Dr. Kevin Baum (Center for European Research in Trusted AI (CERTAIN), German Research Center for Artificial Intelligence (DFKI), Saarbrücken), Stevie Chancellor (Computer Science and Engineering, University of Minnesota), Enrico Costanza (UCL Interaction Centre, University College London), Motahhare Eslami (School of Computer Science, Carnegie Mellon University), Anna Maria Feit (Saarland Informatics Campus, Saarland University), Susanne Gaube (Global Business School for Health (GBSH), University College London (UCL)), Ujwal Gadiraju (Web Information Systems, Delft University of Technology), Harmanpreet Kaur (University of Minnesota)},

url = {https://cix.cs.uni-saarland.de/, website},

doi = {10.1145/3772363.3778736},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {As AI systems are increasingly adopted in high-stakes domains such as healthcare, autonomous driving, and criminal justice, their failures may threaten human safety and rights. Human oversight of AI systems is therefore critically important as a potential safeguard to prevent harmful consequences in high-risk AI applications. The global regulatory and policy landscape for AI governance remains understandably fragmented and diverse. While frameworks like the European AI Act require human oversight for high-risk AI systems, there is currently a lack of well-defined methodologies and conceptual clarity to operationalize such oversight effectively. Independent of policy and regulation, poorly designed oversight can create dangerous illusions of safety while obscuring accountability. This interdisciplinary workshop aims to bring together researchers from various disciplines, including AI, HCI, psychology, law, and policy, to address this critical gap. We will explore the following questions: (1) What are the greatest challenges to achieving effective human oversight of AI systems? (2) How can we design AI systems that enable meaningful human oversight? (3) How do we assign responsibilities to and support the various stakeholders involved in oversight? Through talks and interactive group discussions, participants will identify oversight challenges; examine stakeholder roles; discuss supporting tools, methods, and regulatory frameworks; and establish a collaborative research agenda. Our central goal is to further a roadmap that enables effective human oversight for the responsible deployment of AI in society.},

keywords = {Workshops},

pubstate = {published},

tppubtype = {inproceedings}

}

AI-Supported Electrocardiogram Interpretation: The Effect of Support Presentation on Diagnostic Accuracy, Psychological Need Satisfaction, and Diagnosis Time

Tobias Grundgeiger (Julius-Maximilians-Universität Würzburg, Germany), Louisa Maurer (Julius-Maximilians-Universität Würzburg, Germany), Carlos Ramon Hölzing (University Hospital Würzburg, Germany), Oliver Happel (University Hospital Würzburg, Germany)

Abstract | Tags: Papers | Links:

@inproceedings{Grundgeiger2026AisupportedElectrocardiogram,

title = {AI-Supported Electrocardiogram Interpretation: The Effect of Support Presentation on Diagnostic Accuracy, Psychological Need Satisfaction, and Diagnosis Time},

author = {Tobias Grundgeiger (Julius-Maximilians-Universität Würzburg, Germany), Louisa Maurer (Julius-Maximilians-Universität Würzburg, Germany), Carlos Ramon Hölzing (University Hospital Würzburg, Germany), Oliver Happel (University Hospital Würzburg, Germany)},

url = {https://www.mcm.uni-wuerzburg.de/psyergo/, website},

doi = {10.1145/3772318.3790619},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Interpreting electrocardiograms (ECGs) is an important but complex and error-prone task. While diagnostic support algorithms exist, how support is displayed and how clinicians interact with ECG diagnostic and clinical decision support systems in general remain underexplored. In this preregistered experiment, we studied how providing clinicians with different versions of diagnostic support affects ECG interpretation. All four support types improved diagnosis accuracy compared to a no-support control condition, but the most effective was support offering visual ECG trace markings. User experience, in the form of psychological need satisfaction of competence and security, was highest when clinicians first viewed the ECG independently and then received support in a second stage. The latter two-stage support also resulted in the shortest diagnosis times. We conclude with design and research implications for creating clinician-algorithmic support interactions that improve user experience, efficacy, and effectiveness in the present study, and may ultimately contribute to patient safety.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

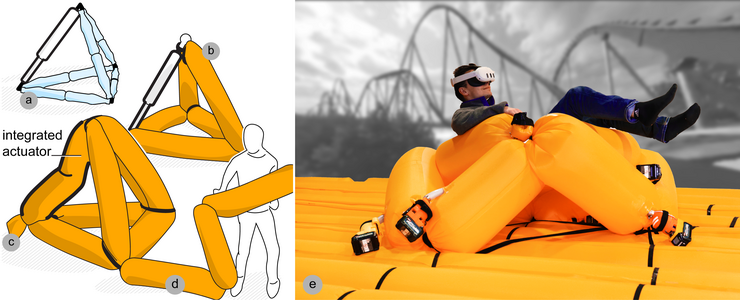

AirForce: Personal Fabrication of Large-Scale, Load-Bearing Animatronics Structures from a Single Tube

Lukas Rambold (Hasso-Plattner-Institut), Robert Kovacs (Hasso-Plattner-Institut), Min Deng (Hasso-Plattner-Institut), Antonius Naumann (Hasso-Plattner-Institut), Konrad Gerlach (Hasso-Plattner-Institut), Horatio Hamkins (Hasso-Plattner-Institut), Helena Lendowski (Hasso-Plattner-Institut), Chiao Fang (Hasso-Plattner-Institut), Shohei Katakura (Hasso-Plattner-Institut), Conrad Lempert (Hasso-Plattner-Institut), Muhammad Abdullah (Hasso-Plattner-Institut), Patrick Baudisch (Hasso-Plattner-Institut)

Abstract | Tags: Papers | Links:

@inproceedings{Rambold2026Airforce,

title = {AirForce: Personal Fabrication of Large-Scale, Load-Bearing Animatronics Structures from a Single Tube},

author = {Lukas Rambold (Hasso-Plattner-Institut), Robert Kovacs (Hasso-Plattner-Institut), Min Deng (Hasso-Plattner-Institut), Antonius Naumann (Hasso-Plattner-Institut), Konrad Gerlach (Hasso-Plattner-Institut), Horatio Hamkins (Hasso-Plattner-Institut), Helena Lendowski (Hasso-Plattner-Institut), Chiao Fang (Hasso-Plattner-Institut), Shohei Katakura (Hasso-Plattner-Institut), Conrad Lempert (Hasso-Plattner-Institut), Muhammad Abdullah (Hasso-Plattner-Institut), Patrick Baudisch (Hasso-Plattner-Institut)},

url = {hpi.de/baudisch, website

https://www.linkedin.com/in/ramboldio/, author's linkedin},

doi = {10.1145/3772318.3791706},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {We present a fabrication system called AirForce that allows users to create large-scale, load-bearing animated structures from a single inflatable tube. AirForce builds on the personal fabrication of animated truss structures, based on which it replaces not only the static elements with tube, but also introduces tube-based actuators that integrate with that same tube. This ‘single-tube’ design affords efficient single-person assembly, excellent power-to-weight ratio, easy transport and setup, and 100% material reuse. We show three variants of actuators: buckling actuators for pushing, muscle actuators for pulling, and telescoping actuators for large forces. Our blender plugin enables users to place actuators in structures and export instructions for efficient fabrication. We demonstrate a 6DOF motion platform that lifts humans and an 8m high animatronic T-rex that animates with 3DOF, enabled by custom hardware components. In our technical evaluation, the three actuators delivered 480N, 1420N, and 2330N peak forces, respectively.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Anticipating Physical Processes in VR: Environment Type and Scale Alter Temporal Expectations

Martin Riemer (Technical University Berlin), Elisa Valletta (University of Regensburg), David Halbhuber (University of Regensburg), Johanna Bogon (University of Regensburg)

Abstract | Tags: Papers | Links:

@inproceedings{Riemer2026AnticipatingPhysical,

title = {Anticipating Physical Processes in VR: Environment Type and Scale Alter Temporal Expectations},

author = {Martin Riemer (Technical University Berlin), Elisa Valletta (University of Regensburg), David Halbhuber (University of Regensburg), Johanna Bogon (University of Regensburg)},

url = {https://www.uni-regensburg.de/informatik-data-science/fakultaet/einrichtungen/medieninformatik, website

https://www.linkedin.com/in/elisa-valletta-041b3939b/, author's linkedin},

doi = {10.1145/3772318.3791767},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Accurate temporal expectations support interaction in virtual reality (VR), yet it remains unclear whether the internal models that guide such expectations in the real world transfer unchanged to immersive VR. We report two experiments examining expected durations of gravity-driven motion across real and virtual environments. In Experiment 1, participants imagined a ball rolling down ramps in a physical lab, a 1:1 VR replica, and an up-scaled VR room and produced the time the imagined process would take. Results revealed systematic distortions: durations were underestimated in VR relative to the physical lab, and larger virtual spaces elicited longer durations. Experiment 2 assessed whether participants incorporated gravity laws into their simulations. Although gravitational acceleration was consistently underestimated, it was incorporated in both real and virtual environments. Our findings show that VR and its spatial scale bias temporal expectations, with implications for the design of temporally coherent and physically plausible VR experiences.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Anticipation Before Action: EEG-Based Implicit Intent Detection for Adaptive Gaze Interaction in Mixed Reality

Francesco Chiossi (LMU Munich, Munich, Germany), Elnur Imamaliyev (Department of Neuroscience, Carl von Ossietzky Universität Oldenburg, Oldenburg, Germany), Martin Bleichner (Department of Psychology, Carl von Ossietzky Universität Oldenburg, Oldenburg, Germany), Sven Mayer (TU Dortmund University, Dortmund, Germany; Research Center Trustworthy Data Science and Security, Dortmund, Germany)

Abstract | Tags: Papers | Links:

@inproceedings{Chiossi2026AnticipationBefore,

title = {Anticipation Before Action: EEG-Based Implicit Intent Detection for Adaptive Gaze Interaction in Mixed Reality},

author = {Francesco Chiossi (LMU Munich, Munich, Germany), Elnur Imamaliyev (Department of Neuroscience, Carl von Ossietzky Universität Oldenburg, Oldenburg, Germany), Martin Bleichner (Department of Psychology, Carl von Ossietzky Universität Oldenburg, Oldenburg, Germany), Sven Mayer (TU Dortmund University, Dortmund, Germany; Research Center Trustworthy Data Science and Security, Dortmund, Germany)},

url = {https://www.medien.ifi.lmu.de/, website

https://www.linkedin.com/company/lmu-media-informatics-group/posts/?feedView=all, lab's linkedin

https://www.linkedin.com/in/francescochiossi/, author's linkedin

https://www.francesco-chiossi-hci.com/, social media},

doi = {10.1145/3772318.3790523},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Mixed Reality (MR) interfaces increasingly rely on gaze for interaction, yet distinguishing visual attention from intentional action remains difficult, leading to the Midas Touch problem. Existing solutions require explicit confirmations, while brain–computer interfaces may provide an implicit marker of intention using Stimulus-Preceding Negativity (SPN). We investigated how Intention (Select vs. Observe) and Feedback (With vs. Without) modulate SPN during gaze-based MR interactions. During realistic selection tasks, we acquired EEG and eye-tracking data from 28 participants. SPN was robustly elicited and sensitive to both factors: observation without feedback produced the strongest amplitudes, while intention to select and expectation of feedback reduced activity, suggesting SPN reflects anticipatory uncertainty rather than motor preparation. Complementary decoding with deep learning models achieved reliable person-dependent classification of user intention, with accuracies ranging from 75% to 97% across participants. These findings identify SPN as an implicit marker for building intention-aware MR interfaces that mitigate the Midas Touch.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

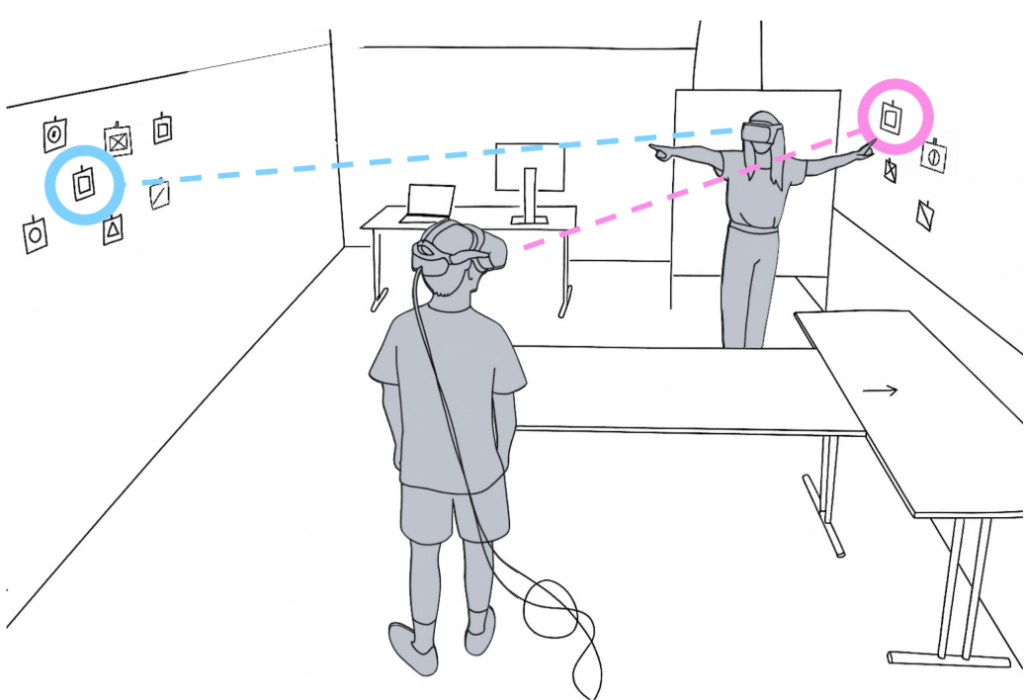

Anticipation Without Acceleration: Benefits of Shared Gaze in Collocated Augmented Reality Collaboration

Julian Rasch (LMU Munich), Vladislav Dmitrievic Rusakov (LMU Munich), Jan Leusmann (LMU Munich), Florian Müller (TU Darmstadt), Albrecht Schmidt (LMU Munich)

Abstract | Tags: Papers | Links:

@inproceedings{Rasch2026AnticipationWithout,

title = {Anticipation Without Acceleration: Benefits of Shared Gaze in Collocated Augmented Reality Collaboration},

author = {Julian Rasch (LMU Munich), Vladislav Dmitrievic Rusakov (LMU Munich), Jan Leusmann (LMU Munich), Florian Müller (TU Darmstadt), Albrecht Schmidt (LMU Munich)},

url = {https://www.medien.ifi.lmu.de/index.xhtml, website

https://www.linkedin.com/company/lmu-media-informatics-group/, lab's linkedin

https://de.linkedin.com/in/julian-rasch, author's linkedin},

doi = {10.1145/3772318.3791758},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Knowing what collaborators attend to is essential. Previous studies demonstrated that shared gaze enhances coordination and social connectedness in remote settings. In collocated settings, gaze can be both naturally observable and technologically augmented. AR enables gaze cues to be rendered explicitly in the environment. To investigate if and how such cues are beneficial in collocated AR collaboration, we examined both qualitative and quantitative effects across three task types (puzzle, negotiation, search) and two spatial setups (plane, room), focusing on task completion time and the collaborative experience. In our user study with 24 dyads (n=48), we varied gaze visibility and measured task performance, user preference, social connectedness, and shared attention. Our results show that sharing gaze in collocated collaborative AR can increase shared attention, is perceived as helpful, and improves the user experience, similar to remote collaboration, but has a limited impact on the actual task completion time across the chosen tasks.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

AR-Cues for Task Resumption Change Users’ Strategy for Dealing with Deferrable Interruptions

Kilian Bahnsen (Julius-Maximilians-Universität Würzburg, Germany), Tobias Grundgeiger (Julius-Maximilians-Universität Würzburg, Germany)

Abstract | Tags: Papers | Links:

@inproceedings{Bahnsen2026ArcuesTask,

title = {AR-Cues for Task Resumption Change Users’ Strategy for Dealing with Deferrable Interruptions},

author = {Kilian Bahnsen (Julius-Maximilians-Universität Würzburg, Germany), Tobias Grundgeiger (Julius-Maximilians-Universität Würzburg, Germany)},

url = {https://www.mcm.uni-wuerzburg.de/psyergo/, website},

doi = {10.1145/3772318.3790673},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {When given the opportunity, people tend to try to reach coarse breakpoints for work interruptions. Coarse breakpoints are frequently associated with less effort when resuming the task. We investigated how supporting task resumption with augmented reality (AR)-cues affects this behavior. In a mixed factorial experiment, 50 participants performed a physical sorting task that included deferrable interruptions with varying distances to a coarse breakpoint, either with or without an AR-cue indicating the next correct step after interruption. Participants with AR-cue accepted interruptions at fine breakpoints more frequently than those without a cue, except when the coarse breakpoint was one step away, and reported less stress. Our findings indicate that AR-cues attenuate but do not eliminate the need for specific task resumption strategies, such as reaching a coarse breakpoint, and reduce the stress. Considering AR-cues for task resumption may be particularly beneficial for time-critical interruptions and fast-paced work environments.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

AttentiveLearn: Personalized Post-Lecture Support for Gaze-Aware Immersive Learning

Shi Liu (KIT, h-lab), Martin Feick (KIT, h-lab), Linus Bierhoff (KIT, h-lab), Alexander Maedche (KIT, h-lab)

Abstract | Tags: Papers | Links:

@inproceedings{Liu2026Attentivelearn,

title = {AttentiveLearn: Personalized Post-Lecture Support for Gaze-Aware Immersive Learning},

author = {Shi Liu (KIT, h-lab), Martin Feick (KIT, h-lab), Linus Bierhoff (KIT, h-lab), Alexander Maedche (KIT, h-lab)},

url = {https://h-lab.win.kit.edu/, website},

doi = {10.1145/3772318.3790667},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Immersive learning environments such as virtual classrooms in Virtual Reality (VR) offer learners unique learning experiences, yet providing effective learner support remains a challenge. While prior HCI research has explored in-lecture support for immersive learning, little research has been conducted to provide post-lecture support, despite being critical for sustained motivation, engagement, and learning outcomes. To address this, we present AttentiveLearn, a learning ecosystem that generates personalized quizzes on a mobile learning assistant based on learners’ attention distribution inferred using eye-tracking in VR lectures. We evaluated the system in a four-week field study with 36 university students attending lectures on Bayesian data analysis. AttentiveLearn improved learners’ reported motivation and engagement, without conclusive evidence of learning gains. Meanwhile, anecdotal evidence suggested improvements in attention for certain participants over time. Based on our findings of the field study, we provide empirical insights and design implications for personalized post-lecture support for immersive learning systems.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Augmented Body Parts: Bridging VR Embodiment and Wearable Robotics

HyeonBeom Yi (Electronics and Telecommunications Research Institute, Daejeon, Republic of Korea), Myung Jin (MJ) Kim (Electronics and Telecommunications Research Institute, Daejeon, Republic of Korea), Seungwoo Je (Southern University of Science and Technology, Shenzhen, China), Seungjae Oh (Kyung Hee University, Yongin, Republic of Korea), Shuto Takashita (University of Tokyo, Tokyo, Japan), Hongyu Zhou (University of Sydney, Sydney, Australia), Marie Muehlhaus (Saarland University, Saarbrücken, Germany), Dr. Eyal Ofek (University of Birmingham, Birmingham, United Kingdom), Andrea Bianchi (KAIST, Daejeon, Republic of Korea)

Abstract | Tags: Workshops | Links:

@inproceedings{Yi2026AugmentedBody,

title = {Augmented Body Parts: Bridging VR Embodiment and Wearable Robotics},

author = {HyeonBeom Yi (Electronics and Telecommunications Research Institute, Daejeon, Republic of Korea), Myung Jin (MJ) Kim (Electronics and Telecommunications Research Institute, Daejeon, Republic of Korea), Seungwoo Je (Southern University of Science and Technology, Shenzhen, China), Seungjae Oh (Kyung Hee University, Yongin, Republic of Korea), Shuto Takashita (University of Tokyo, Tokyo, Japan), Hongyu Zhou (University of Sydney, Sydney, Australia), Marie Muehlhaus (Saarland University, Saarbrücken, Germany), Dr. Eyal Ofek (University of Birmingham, Birmingham, United Kingdom), Andrea Bianchi (KAIST, Daejeon, Republic of Korea)},

url = {https://hci.cs.uni-saarland.de, website

https://www.linkedin.com/company/saarhcilab/, lab's linkedin},

doi = {10.1145/3772363.3778688},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Recent work across HCI/HRI and wearable robotics has investigated how people control and perceive extra body parts in both virtual and physical settings. Virtual embodiment in XR has shown that users can experience ownership and agency with non-anthropomorphic avatars, while wearable robotics has introduced supernumerary limbs such as third arms and robotic tails. Despite these shared goals, connections between findings remain limited because VR and hardware studies rely on different assumptions about sensory feedback, human perception, and physical constraints, making insights difficult to transfer across contexts. This workshop brings together researchers in XR, wearable robotics, haptics, and neuroscience to explore how to foster embodiment and adaptation with augmented body parts, and how to bridge virtual embodiment to effective use with wearable devices. Through a keynote, brief position shares, and two hands-on group activities, participants will examine control mappings and sensory-feedback strategies and identify which aspects of VR-based embodiment realistically transfer when accounting for hardware limits, sensor variability, and cognitive load. Ultimately, the workshop aims to articulate a focused research agenda connecting VR-based insights to feasible wearable robotics implementations, enabling future work on augmenting the human body with new parts and capabilities.},

keywords = {Workshops},

pubstate = {published},

tppubtype = {inproceedings}

}

Augmenting Imagery with Multimodal Vibrotactile Representations: Touch, Feel, and Hear

Mazen Salous (OFFIS Institute for Information Technology, Oldenburg, Germany), Matthias Kramer (OFFIS Institute for Information Technology, Oldenburg, Germany), Wilko Heuten (OFFIS Institute for Information Technology, Oldenburg, Germany), Charles Hudin (CEA Tech, Gif Sur Yvettes, France), Susanne Boll (University of Oldenburg, Oldenburg, Germany), Larbi Abdenebaoui (OFFIS Institute for Information Technology, Oldenburg, Germany)

Abstract | Tags: Papers | Links:

@inproceedings{Salous2026AugmentingImagery,

title = {Augmenting Imagery with Multimodal Vibrotactile Representations: Touch, Feel, and Hear},

author = {Mazen Salous (OFFIS Institute for Information Technology, Oldenburg, Germany), Matthias Kramer (OFFIS Institute for Information Technology, Oldenburg, Germany), Wilko Heuten (OFFIS Institute for Information Technology, Oldenburg, Germany), Charles Hudin (CEA Tech, Gif Sur Yvettes, France), Susanne Boll (University of Oldenburg, Oldenburg, Germany), Larbi Abdenebaoui (OFFIS Institute for Information Technology, Oldenburg, Germany)},

url = {https://www.offis.de/, website

https://www.linkedin.com/showcase/offis-science, lab's linkedin

https://www.linkedin.com/in/dr-mazen-salous-a2b84225/, author's linkedin},

doi = {10.1145/3772318.3791493},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Digital images remain largely inaccessible to blind or visually impaired (BVI) people because alt-text rarely conveys how materials feel or sound. We augment material images with multimodal vibrotactile patterns and evaluate four generation pipelines. AP1: prompt with one-shot example, AP2: prompt to audio, then pattern, AP3: real finger–material recording to pattern, and AP4: patterns from a public haptic database. A custom multilocal vibrotactile tablet played patterns on 10 material images (e.g., wood, stone, glass). Eight BVI participants explored each image with four patterns and ranked the best match. Think-aloud feedback highlighted: Theme 1 (realism — rough/grainy for wood and stone; smooth/steady for glass), Theme 2 (distinctiveness — separable cues; uniform buzzes were criticized), Theme 3 (personal associations), Theme 4 (effort/calibration for faint/noisy patterns; intensity tuning), and Theme 5 (preferences/suggestions). Exploratory ranks (n=8) echoed hybrid, user-tunable pipelines for accessible material perception.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Balancing Accuracy and Embodiment: A Hybrid Perspective for Complex Visuomotor Tasks in VR

Dennis Dietz (LMU Munich), Sebastian Walz (LMU Munich), Sven Mayer (TU Dortmund University), Andreas Butz (LMU Munich), Matthias Hoppe (Keio University Graduate School of Media Design)

Abstract | Tags: Papers | Links:

@inproceedings{Dietz2026BalancingAccuracy,

title = {Balancing Accuracy and Embodiment: A Hybrid Perspective for Complex Visuomotor Tasks in VR},

author = {Dennis Dietz (LMU Munich), Sebastian Walz (LMU Munich), Sven Mayer (TU Dortmund University), Andreas Butz (LMU Munich), Matthias Hoppe (Keio University Graduate School of Media Design)},

url = {https://www.medien.ifi.lmu.de/, website},

doi = {10.1145/3772318.3791472},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Visual perspective is a crucial design factor in Virtual Reality (VR). Especially when complex motor tasks are involved, it can affect both objective performance and subjective experience. We compared four visual perspectives (First-Person view, translucent Ghost view, Third-Person view, and Hybrid view) in a user study (N=20) involving different difficulties in a balancing game. Our findings reveal complex tradeoffs between the sense of embodiment, performance, and preference: The preferred Hybrid perspective offered a significant stability advantage for low task difficulty. However, this benefit vanished with increasing physical demand, revealing a speed-accuracy trade-off where external views required longer completion times. Ego-centric perspectives (First and Ghost) induced a stronger sense of embodiment and presence, but were less preferred. Participants' choice was not determined by representational fidelity but by pragmatic considerations of perceived utility. As perceived effectiveness can overrule objective performance and subjective experience, the choice of perspective is an important consideration for future training and rehabilitation applications in VR.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Balancing Automation and Discretion: How Decision Stakes and Human-AI Collaboration Affect Citizen Perceptions in Public Administration

Saja Aljuneidi (OFFIS - Institute for Information Technology), Wilko Heuten (OFFIS - Institute for Information Technology), Zhamilya Bilyalova (Wellesley College), Maria K Wolters (OFFIS - Institute for Information Technology), Susanne Boll (University of Oldenburg)

Abstract | Tags: Papers | Links:

@inproceedings{Aljuneidi2026BalancingAutomation,

title = {Balancing Automation and Discretion: How Decision Stakes and Human-AI Collaboration Affect Citizen Perceptions in Public Administration},

author = {Saja Aljuneidi (OFFIS - Institute for Information Technology), Wilko Heuten (OFFIS - Institute for Information Technology), Zhamilya Bilyalova (Wellesley College), Maria K Wolters (OFFIS - Institute for Information Technology), Susanne Boll (University of Oldenburg)},

url = {https://hci.uni-oldenburg.de/, website

https://vimeo.com/1161420158?share=copy&fl=sv&fe=ci, teaser video

www.linkedin.com/in/saljuneidi, author's social media},

doi = {10.1145/3772318.3790795},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {The growing use of AI in public administration improves efficiency, yet its use in discretionary decisions raises concerns about fairness and legitimacy. While prior research examined decision stakes and Human–AI decision-making configurations separately, their combined effect on citizens’ perceptions of fairness and adoption remains underexplored. We conducted a mixed-method Wizard-of-Oz study (n=43) using an Intelligent-Self-Service-Kiosk. Participants completed a low-stakes (ID renewal) and a high-stakes (social housing) task under one of three decision-making configurations: AI alone, AI with human-supervision, and human with AI advice or recommendation. Quantitative analysis found no significant effects, highlighting the limits of standard metrics. However, qualitative interviews revealed that citizens valued human involvement, requiring meaningful over symbolic oversight. They emphasized interactive dialogue before decisions to capture their circumstances and after, to facilitate appeals. We contribute evidence of tensions between citizens’ desire for efficiency and need for human-control and fairness. We provide guidance for designing citizen-centered AI systems that align with democratic values.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Beyond Links: Exploring Visual Representations of Multi-View Relations in Mixed Reality

Weizhou Luo (Interactive Media Lab Dresden, TUD Dresden University of Technology, Dresden, Germany), Rufat Rzayev (Interactive Media Lab Dresden, TUD Dresden University of Technology, Dresden, Germany), Benjamin Russig (Computer Graphics and Visualization, TUD Dresden University of Technology, Dresden, Germany), Sivanon Visutarporn (Interactive Media Lab Dresden, TUD Dresden University of Technology, Dresden, Germany), Marc Satkowski (Fraunhofer Institute for Process Engineering and Packaging IVV, Dresden, Germany), Stefan Gumhold (Computer Graphics and Visualization, TUD Dresden University of Technology, Dresden, Germany), Raimund Dachselt (Interactive Media Lab Dresden, TUD Dresden University of Technology, Dresden, Germany)

Abstract | Tags: Papers | Links:

@inproceedings{Luo2026BeyondLinks,

title = {Beyond Links: Exploring Visual Representations of Multi-View Relations in Mixed Reality},

author = {Weizhou Luo (Interactive Media Lab Dresden, TUD Dresden University of Technology, Dresden, Germany), Rufat Rzayev (Interactive Media Lab Dresden, TUD Dresden University of Technology, Dresden, Germany), Benjamin Russig (Computer Graphics and Visualization, TUD Dresden University of Technology, Dresden, Germany), Sivanon Visutarporn (Interactive Media Lab Dresden, TUD Dresden University of Technology, Dresden, Germany), Marc Satkowski (Fraunhofer Institute for Process Engineering and Packaging IVV, Dresden, Germany), Stefan Gumhold (Computer Graphics and Visualization, TUD Dresden University of Technology, Dresden, Germany), Raimund Dachselt (Interactive Media Lab Dresden, TUD Dresden University of Technology, Dresden, Germany)},

url = {https://imld.de/en/, website

https://www.linkedin.com/company/iml-dresden/, lab's linkedin

linkedin.com/in/weizhou-luo-8ab457bb, author's social media},

doi = {10.1145/3772318.3791398},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

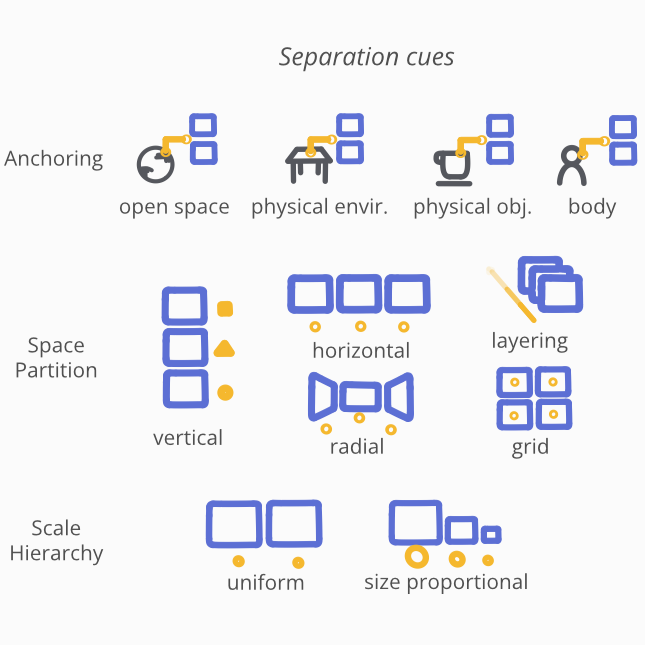

abstract = {This paper investigates associations, explicit representations of relations between multiple views in Mixed Reality (MR). While research on 2D desktop environments offers extensive recommendations for communicating relations between multiple views, MR environments lack such systematic guidance, necessitating adapted solutions that consider their spatial affordances. To address this gap, we systematically explored association techniques in existing research. Building on established 2D multi-view literature and refining insights from prior design principles, we developed a codebook to describe view relations and their representations. Applying it to a corpus of 44 immersive multi-view approaches, we identified recurring design strategies and synthesized them into a design space of visual association techniques adapted for immersive contexts. Based on a lightweight prototyping framework, we validate the utility of the design space through three envisioning scenarios, demonstrating how associations can support exploration, coordination, and sensemaking in MR applications. Our results inform the design of MR multi-view environments.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

BotaXplore: Enhancing Visitor Engagement and Learning in Botanical Gardens Through Mobile Technology

Albin Zeqiri (Ulm University), Tobias Wagner (Ulm University), Johanna Grüneberg (LMU Munich), Enrico Rukzio (Ulm University)

Abstract | Tags: Posters | Links:

@inproceedings{Zeqiri2026Botaxplore,

title = {BotaXplore: Enhancing Visitor Engagement and Learning in Botanical Gardens Through Mobile Technology},

author = {Albin Zeqiri (Ulm University), Tobias Wagner (Ulm University), Johanna Grüneberg (LMU Munich), Enrico Rukzio (Ulm University)},

url = {https://www.uni-ulm.de/in/mi/hci/, website

https://az16.github.io/, author's social media

https://wgnrto.de/, author's social media},

doi = {10.1145/3772363.3799272},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Educational guided visits in botanical gardens offer valuable opportunities for learning and engagement that promote awareness of the importance of biological diversity, its conservation, and sustainable use. However, a focus group with five botanists identified challenges in designing tours for heterogeneous audiences that foster curiosity and interest, as well as in tailoring educational content. To address these aspects, this paper presents BotaXplore, a prototype mobile application that supports plant exploration and learning in botanical gardens through three modes: exploratory, semi-guided, and tour-based. Using photo-based identification, users access short facts and quizzes about plants, and discovered species are added to a personal collection. Building on this prototype, we plan to evaluate the app's impact on nature engagement and learning outcomes after improving learning paths, content generation, and support for collaborative exploration.},

keywords = {Posters},

pubstate = {published},

tppubtype = {inproceedings}

}

Challenges in Synchronous & Remote Collaboration Around Visualization

Matthew Brehmer (University of Waterloo), Maxime Cordeil (University of Queensland), Christophe Hurter (Université de Toulouse), Takayuki Itoh (Ochanomizu University), Wolfgang Büschel (University of Stuttgart), Mahmood Jasim (Louisiana State University), Arnaud Prouzeau (Université Paris-Saclay), David Saffo (J.P. Morgan Chase & Co.), Lyn Bartram (Simon Fraser University), Sheelagh Carpendale (Simon Fraser University), Chen Zhu-Tian (University of Minnesota-Twin Cities), Andrew Cunningham (University of South Australia), Tim Dwyer (Monash University), Samuel Huron (Institut Polytechnique de Paris), Masahiko Itoh (Hokkaido Information University), Alark Joshi (University of San Francisco), Kiyoshi Kiyokawa (Nara Institute of Science and Technology), Hideaki Kuzuoka (University of Tokyo), Bongshin Lee (Yonsei University), Gabriela Molina León (Aarhus University), Harald Reiterer (University of Konstanz), Bektur Ryskeldiev (Mercari R4D), Jonathan Schwabish (Urban Institute), Brian A. Smith (Columbia University), Yasuyuki Sumi (Future University Hakodate), Ryo Suzuki (University of Colorado Boulder), Anthony Tang (Singapore Management University), Yalong Yang (Georgia Institute of Technology), Jian Zhao (University of Waterloo)

Abstract | Tags: Papers | Links:

@publication{Brehmer2026ChallengesSynchronous,

title = {Challenges in Synchronous & Remote Collaboration Around Visualization},

author = {Matthew Brehmer (University of Waterloo), Maxime Cordeil (University of Queensland), Christophe Hurter (Université de Toulouse), Takayuki Itoh (Ochanomizu University), Wolfgang Büschel (University of Stuttgart), Mahmood Jasim (Louisiana State University), Arnaud Prouzeau (Université Paris-Saclay), David Saffo (J.P. Morgan Chase & Co.), Lyn Bartram (Simon Fraser University), Sheelagh Carpendale (Simon Fraser University), Chen Zhu-Tian (University of Minnesota-Twin Cities), Andrew Cunningham (University of South Australia), Tim Dwyer (Monash University), Samuel Huron (Institut Polytechnique de Paris), Masahiko Itoh (Hokkaido Information University), Alark Joshi (University of San Francisco), Kiyoshi Kiyokawa (Nara Institute of Science and Technology), Hideaki Kuzuoka (University of Tokyo), Bongshin Lee (Yonsei University), Gabriela Molina León (Aarhus University), Harald Reiterer (University of Konstanz), Bektur Ryskeldiev (Mercari R4D), Jonathan Schwabish (Urban Institute), Brian A. Smith (Columbia University), Yasuyuki Sumi (Future University Hakodate), Ryo Suzuki (University of Colorado Boulder), Anthony Tang (Singapore Management University), Yalong Yang (Georgia Institute of Technology), Jian Zhao (University of Waterloo)},

url = {http://hci.uni-konstanz.de, website

https://www.linkedin.com/company/105489067/admin/page-posts/published/, lab's linkedin},

doi = {10.1145/3772318.3791117},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {We characterize 16 challenges faced by those investigating and developing remote and synchronous collaborative experiences around visualization. Our work reflects the perspectives and prior research efforts of an international group of 29 experts from across human-computer interaction and visualization sub-communities. The challenges are anchored around five collaborative activities that exhibit a centrality of visualization and multimodal communication. These activities include exploratory data analysis, creative ideation, visualization-rich presentations, joint decision making grounded in data, and real-time data monitoring. The challenges also reflect the changing dynamics of these activities in the face of recent advances in extended reality (XR) and artificial intelligence (AI). As an organizing scheme for future research at the intersection of visualization and computer-supported cooperative work, we align the challenges with a sequence of four sets of research and development activities: technological choices, social factors, AI assistance, and evaluation.},

keywords = {Papers},

pubstate = {published},

tppubtype = {publication}

}

Characterising Gaming Group Experiences

Daniel Reis (Universidade de Lisboa), Kathrin Gerling (KIT), André Rodrigues (Universidade de Lisboa)

Abstract | Tags: Papers | Links:

@inproceedings{Reis2026CharacterisingGaming,

title = {Characterising Gaming Group Experiences},

author = {Daniel Reis (Universidade de Lisboa), Kathrin Gerling (KIT), André Rodrigues (Universidade de Lisboa)},

url = {https://hci.iar.kit.edu, website},

doi = {10.1145/3772318.3791223},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {When people play digital games together, their experiences are often influenced by the group. While prior research has focused on the individual player experience, we argue that a deeper understanding of group dynamics is required for designing digital games that effectively support complex social interactions. In this paper, we characterise the lived group experiences of fifteen long-term players, using qualitative content analysis of semi-structured interviews examining group lifecycles, their impact on play, and how games and platforms support or constrain them. Our findings show that gaming groups are diverse, often shifting between people- and task-orientation based on needs and motivations. They influence how games are experienced, establishing shared practices that persist across contexts. Yet, while games and tools support group play, they often lack flexibility to accommodate such evolving and nuanced social dynamics. We provide insight into how group-based play unfolds and examples of how games can better support it.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

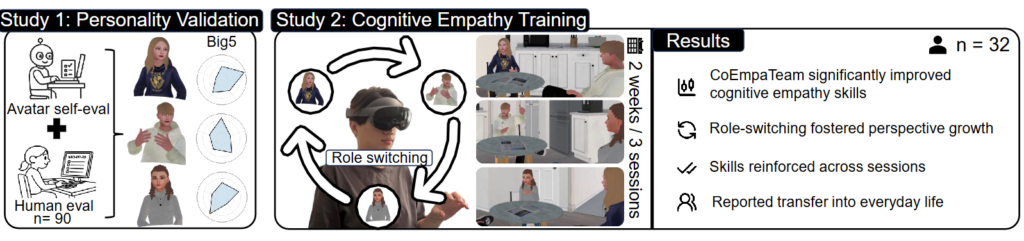

CoEmpaTeam: Enhancing Cognitive Empathy using LLM-based Avatars and Dynamic Role Play in Virtual Reality

Dehui Kong (Human-Centered Systems Lab (h-lab), Karlsruhe Institute of Technology (KIT)), Martin Feick (Human-Centered Systems Lab (h-lab), Karlsruhe Institute of Technology (KIT)), Shi Liu (Human-Centered Systems Lab (h-lab), Karlsruhe Institute of Technology (KIT)), Alexander Maedche (Human-Centered Systems Lab (h-lab), Karlsruhe Institute of Technology (KIT))

Abstract | Tags: Papers | Links:

@inproceedings{Kong2026Coempateam,

title = {CoEmpaTeam: Enhancing Cognitive Empathy using LLM-based Avatars and Dynamic Role Play in Virtual Reality},

author = {Dehui Kong (Human-Centered Systems Lab (h-lab), Karlsruhe Institute of Technology (KIT)), Martin Feick (Human-Centered Systems Lab (h-lab), Karlsruhe Institute of Technology (KIT)), Shi Liu (Human-Centered Systems Lab (h-lab), Karlsruhe Institute of Technology (KIT)), Alexander Maedche (Human-Centered Systems Lab (h-lab), Karlsruhe Institute of Technology (KIT))},

url = {https://h-lab.win.kit.edu/, website

https://youtu.be/WlE8jhRTFps, full video

https://www.linkedin.com/in/dehui-kong-0aa8a7306/, author's linkedin},

doi = {10.1145/3772318.3790389},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Cognitive empathy, the ability to understand others’ perspectives, is essential for effective communication, reducing biases, and constructive negotiation. However, this skill is declining in a performance-driven society, which prioritizes efficiency over perspective-taking. Here, the training of cognitive empathy is challenging because it is a subtle, hard-to-perceive soft skill. To address this, we developed CoEmpaTeam, a VR-based system that enables users to train their cognitive empathy by using LLM-driven avatars with different personalities. Through dynamic role play, users actively engage in perspective-taking, experiencing situations through another person's eyes. CoEmpaTeam deploys three avatars who significantly differ in their personality, validated by a technical evaluation and an online experiment (n=90). Next, we evaluated the system through a lab experiment with 32 participants who performed three sessions across two weeks, followed by a one-week diary study. Our results showed a significant increase in cognitive empathy, which, according to participants, transferred into their real lives.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Collaborative Document Editing with Multiple Users and AI Agents

Florian Lehmann (University of Bayreuth), Krystsina Shauchenka (University of Bayreuth), Daniel Buschek (University of Bayreuth)

Abstract | Tags: Papers | Links:

@inproceedings{Lehmann2026CollaborativeDocument,

title = {Collaborative Document Editing with Multiple Users and AI Agents},

author = {Florian Lehmann (University of Bayreuth), Krystsina Shauchenka (University of Bayreuth), Daniel Buschek (University of Bayreuth)},

url = {https://www.hciai.uni-bayreuth.de, website},

doi = {10.1145/3772318.3790648},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Current AI writing support tools are largely designed for individuals, complicating collaboration when co-writers must leave the shared workspace to use AI and then communicate and reintegrate results. We propose integrating AI agents directly into collaborative writing environments. Our prototype makes AI use visible to all users through two new shared objects: user-defined agent profiles and tasks. Agent responses appear in the familiar comment feature. In a user study (N=30), 14 teams worked on writing projects during one week. Interaction logs and interviews show that teams incorporated agents into existing norms of authorship, control, and coordination, rather than treating them as team members. Agent profiles were viewed as personal territory, while created agents and outputs became shared resources. We discuss implications for team-based AI interaction, highlighting opportunities and boundaries for treating AI as a shared resource in collaborative work.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Connected Material Experiences using Bimanual Vibrotactile Crosstalk in Virtual Reality

Nihar Sabnis (Sensorimotor Interaction Group, Max Planck Institute for Informatics, Germany), André Zenner, Erik Peralta Løvaas (Sensorimotor Interaction, Max Planck Institute for Informatics)

Abstract | Tags: Papers | Links:

@inproceedings{Sabnis2026ConnectedMaterial,

title = {Connected Material Experiences using Bimanual Vibrotactile Crosstalk in Virtual Reality},

author = {Nihar Sabnis (Sensorimotor Interaction Group, Max Planck Institute for Informatics, Germany), André Zenner, Erik Peralta Løvaas (Sensorimotor Interaction, Max Planck Institute for Informatics)},

doi = {10.1145/3772318.3790767},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Perceiving material properties such as elasticity, flexibility, and torsion is inherently bimanual, as we rely on the relative motion of our hands to form a unified sense of materiality. Yet, most vibrotactile material rendering approaches are limited to a single hand or finger. While prior work has explored bimanual haptic interfaces, most depend on specialized hardware for specific interactions. In this paper, we demonstrate design strategies to support bimanual material exploration through motion-coupled vibrotactile feedback. Our technique introduces variable crosstalk between the controllers' vibration to evoke connectedness, making two unconnected devices feel as though they manipulate a single object. The technique generalizes motion-coupled feedback approaches beyond previous single-point explorations. Through two user studies, we show that this approach (1) significantly enhances perceived connectedness and (2) conveys distinct material qualities such as elasticity and torsion. Finally, we present Dvihastīya, an authoring tool for designing connected bimanual experiences in virtual reality.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Contextualizing Public Interfaces for Meaningful Human-Environment Interactions with Traces in Use

Linda Hirsch (Social Emotional Technology Lab, University of California Santa Cruz, Santa Cruz, California, United States), Dr Marius Hoggenmüller (Design Lab, School of Architecture, Design and Planning, The University of Sydney, Sydney, NSW, Australia), Prof. Dr. Andreas Martin Butz (LMU Munich, Munich, Germany), Louisa Sophie Bekker (LMU Munich, Munich, Germany), Sarita Maria Sridharan (LMU Munich, Munich, Germany), Ceenu George (Chair of Human-Computer Interaction, TU Berlin, Berlin, Germany)

Abstract | Tags: Journal | Links:

@inproceedings{Hirsch2026ContextualizingPublicb,

title = {Contextualizing Public Interfaces for Meaningful Human-Environment Interactions with Traces in Use},

author = {Linda Hirsch (Social Emotional Technology Lab, University of California Santa Cruz, Santa Cruz, California, United States), Dr Marius Hoggenmüller (Design Lab, School of Architecture, Design and Planning, The University of Sydney, Sydney, NSW, Australia), Prof. Dr. Andreas Martin Butz (LMU Munich, Munich, Germany), Louisa Sophie Bekker (LMU Munich, Munich, Germany), Sarita Maria Sridharan (LMU Munich, Munich, Germany), Ceenu George (Chair of Human-Computer Interaction, TU Berlin, Berlin, Germany)},

url = {https://www.hci.tu-berlin.de/, website},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Meaningful interactions positively impact users' meaning-making and feeling socially and culturally connected with their surroundings. However, creating such interactions is a continuous, complex challenge. We present the Traces in Use design concept supporting interface contextualization for meaningful human-environment interactions in public places. The concept was developed and evaluated in three steps: I) exploring traces of use characteristics as inspiration for design, II) introducing the concept definition, its theoretical evaluation, and a supporting framework, and III) practically evaluating the concept by developing, contextualizing, and testing three interfaces (a lion interface, a drum, and a storyteller) in two empirical field studies (N=40). The results show that the concept promotes meaningful interaction by supporting users' feelings of socio-cultural connectedness and meaning-making. With this, our work contributes the Traces in Use design concept, its development, and its methodological application for meaningful human-environment interactions and interface contextualization in public places.},

keywords = {Journal},

pubstate = {published},

tppubtype = {inproceedings}

}

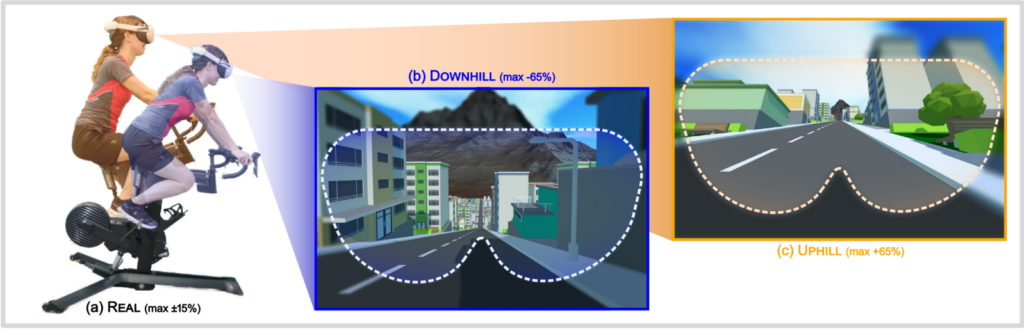

Determining Perception Thresholds for Real and Virtual Inclinations While Cycling in Virtual Reality

Jonas Keppel (University of Duisburg-Essen), Marvin Prochazka (University of Duisburg-Essen), Stefan Lewin (University of Duisburg-Essen), Markus Stroehnisch (University of Duisburg-Essen), Marvin Strauss (University of Duisburg-Essen), André Zenner (Saarland University and DFKI), Donald Degraen (University of Canterbury), Andrii Matviienko (KTH Royal Institute of Technology), Stefan Schneegass (University of Duisburg-Essen)

Abstract | Tags: Papers | Links:

@inproceedings{Keppel2026DeterminingPerception,

title = {Determining Perception Thresholds for Real and Virtual Inclinations While Cycling in Virtual Reality},

author = {Jonas Keppel (University of Duisburg-Essen), Marvin Prochazka (University of Duisburg-Essen), Stefan Lewin (University of Duisburg-Essen), Markus Stroehnisch (University of Duisburg-Essen), Marvin Strauss (University of Duisburg-Essen), André Zenner (Saarland University and DFKI), Donald Degraen (University of Canterbury), Andrii Matviienko (KTH Royal Institute of Technology), Stefan Schneegass (University of Duisburg-Essen)},

url = {https://hci.informatik.uni-due.de/, website

https://de.linkedin.com/company/hci-group-essen, lab's linkedin

https://www.facebook.com/HCIEssen, facebook

https://youtu.be/eT_CP7vTleY, full video},

doi = {10.1145/3772318.3791538},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {In virtual reality (VR) experiences, mismatches between reality and virtuality are usually undesirable, as they can disrupt immersion and induce cybersickness. However, when carefully controlled, they may expand the design space of VR. This research investigates perceptual detection thresholds for mismatches between real and virtual inclinations during cycling in VR. Using a custom simulation},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Development, Evaluation, and Implementation of SEQR – a Usable Secure QR code Scanner

Mattia Mossano (Karlsruhe Institute of Technology), Maxime Fabian Veit (Karlsruhe Institute of Technology), Tobias Länge (Karlsruhe Institute of Technology), Benjamin Maximilian Berens (Karlsruhe Institute of Technology), Filipo Sharevski (DePaul University), Melanie Volkamer (Karlsruhe Institute of Technology)

Abstract | Tags: | Links:

@inproceedings{Mossano2026DevelopmentEvaluation,

title = {Development, Evaluation, and Implementation of SEQR – a Usable Secure QR code Scanner},

author = {Mattia Mossano (Karlsruhe Institute of Technology), Maxime Fabian Veit (Karlsruhe Institute of Technology), Tobias Länge (Karlsruhe Institute of Technology), Benjamin Maximilian Berens (Karlsruhe Institute of Technology), Filipo Sharevski (DePaul University), Melanie Volkamer (Karlsruhe Institute of Technology)},

url = {https://secuso.aifb.kit.edu/, website

https://www.linkedin.com/company/secuso-research-group/, lab's linkedin

www.linkedin.com/in/mattiamossano, author's social media

https://bsky.app/profile/secusoresearch.bsky.social, bluesky

https://mastodon.social/@SECUSO_Research@baw%C3%BC.social, mastodon},

doi = {10.1145/3772318.3793213},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {QR codes are widely used, but can become the vector of phishing attacks (QRishing). To support users, we systematically developed a usable secure QR code scanner, SEQR (Security Enhanced QR code scanner). We based SEQR's design on two systematic reviews: (i) of academic literature (2015–2025), identifying 96 papers on QRishing, and (ii) of the MITRE ATT&CK Mobile repository, finding 36 QRishing techniques. From these two sources, we categorized 60 potential attacks, and divided them between those that SEQR addresses only at the technology level, and those where SEQR involves the users in the decision. We evaluated SEQR's effectiveness in thwarting attacks in a between-subjects online study (n=556), where SEQR achieved 93.35% correct answers, compared to 75.24% for the Apple iOS QR code scanner and 65.11% for the Samsung Android QR code scanner. We implemented SEQR as an open source Android application, available on GitHub.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

Do Citizens Agree with the EU AI Act? Public Perspectives on Risk and Regulation of AI Systems

Gabriel Lima (Max Planck Institute for Security and Privacy), Gustavo Gil Gasiola (Karlsruhe Institute of Technology), Frederike Zufall (Karlsruhe Institute of Technology, Waseda Institute for Advanced Study), Yixin Zou (Max Planck Institute for Security and Privacy)

Abstract | Tags: Papers | Links:

@inproceedings{Lima2026CitizensAgree,

title = {Do Citizens Agree with the EU AI Act? Public Perspectives on Risk and Regulation of AI Systems},

author = {Gabriel Lima (Max Planck Institute for Security and Privacy), Gustavo Gil Gasiola (Karlsruhe Institute of Technology), Frederike Zufall (Karlsruhe Institute of Technology, Waseda Institute for Advanced Study), Yixin Zou (Max Planck Institute for Security and Privacy)},

url = {https://thegcamilo.github.io/, website

https://www.linkedin.com/in/gabriel-lima-531b271a0/, author's linkedin},

doi = {10.1145/3772318.3790535},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {The European Union (EU) has spearheaded the regulation of artificial intelligence (AI) with the AI Act, which regulates AI systems based on the risks they pose to fundamental rights and other protected values. AI systems that pose unacceptable risks are prohibited, high-risk AI systems must comply with mandatory requirements, and minimal risk AI systems are encouraged—but not required—to adopt voluntary standards. Motivated by concerns that the AI Act may not reflect the public's opinions, we investigate how laypeople (N=1,421) assess 48 different AI systems concerning their risk and regulation. We find that people believe all 48 AI systems pose moderate levels of risk and should be regulated (albeit without outright prohibitions). Our findings challenge the AI Act's tiered approach, showing that people might support horizontal regulation requiring minimal standards for AI systems, and provide implications for developers seeking to develop AI aligned with public expectations.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

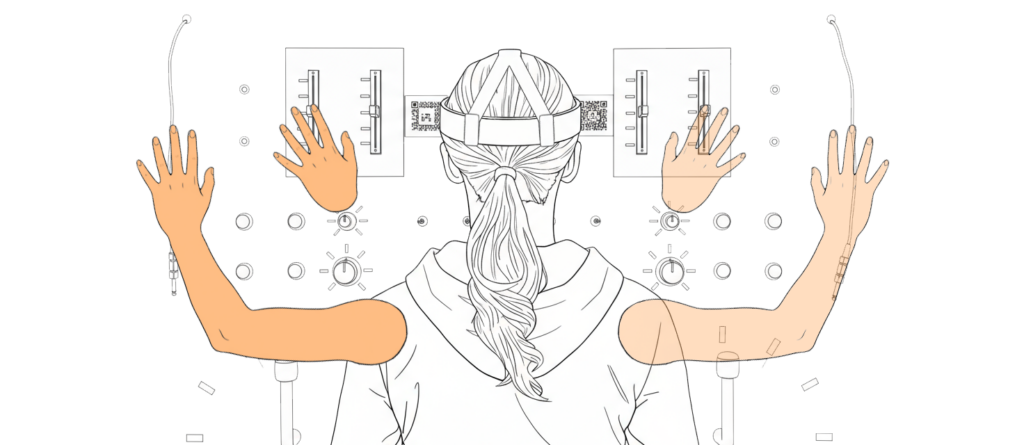

Do It Fast, Forget It Fast: How Timing and Limb Visualizations Affect First-Person Augmented Reality Instructions

Clara Sayffaerth (LMU Munich), Ehbal Ablimit (LMU Munich), Annika Köhler (University Hospital Würzburg), Jonas Wombacher (TU Darmstadt), Albrecht Schmidt (LMU Munich), Florian Müller (TU Darmstadt)

Abstract | Tags: Papers | Links:

@inproceedings{Sayffaerth2026ItFast,

title = {Do It Fast, Forget It Fast: How Timing and Limb Visualizations Affect First-Person Augmented Reality Instructions},

author = {Clara Sayffaerth (LMU Munich), Ehbal Ablimit (LMU Munich), Annika Köhler (University Hospital Würzburg), Jonas Wombacher (TU Darmstadt), Albrecht Schmidt (LMU Munich), Florian Müller (TU Darmstadt)},

url = {https://www.medien.ifi.lmu.de/, website

https://www.linkedin.com/company/lmu-media-informatics-group/, lab's linkedin

https://www.linkedin.com/in/sayffaerth/, author's linkedin},

doi = {10.1145/3772318.3791471},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Acquiring tacit knowledge and practical skills often depends on direct observation and in situ training. AR offers an alternative by overlaying first-person step-by-step instructions that guide users through tasks such as assembly and repair. Previous work demonstrates the effectiveness of AR instruction for specific applications. In our experimental work, we systematically explore aspects of the broader design space. We conducted a controlled experiment (n = 40) to investigate three key factors identified in learning theory and XR embodiment research: imitation timing (parallel vs. sequential), limb visualization (hand vs. full arm), and limb visibility (opaque vs. semi-transparent). Across all conditions, participants followed AR instructions and afterward repeated the tasks from memory. We assessed performance, user experience, and retention. Our results show that parallel imitation is faster and increases embodiment, whereas sequential imitation enhances memory retention and comfort. Our findings provide guidance for the temporal and visual design of first-person AR tutorials.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

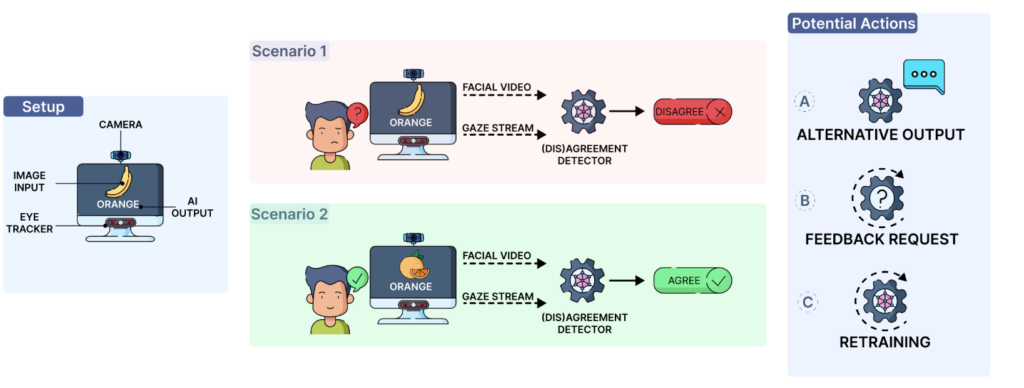

Do You (Dis)agree With Me? Modelling Implicit User Disagreement in Human–AI Interaction Using Gaze Data

Abdulrahman Mohamed Selim (German Research Center for Artificial Intelligence (DFKI)), Omair Shahzad Bhatti (German Research Center for Artificial Intelligence (DFKI)), Amr Gomaa (German Research Center for Artificial Intelligence (DFKI), Saarland Informatics Campus), Michael Barz (German Research Center for Artificial Intelligence (DFKI)), Daniel Sonntag (German Research Center for Artificial Intelligence (DFKI), University of Oldenburg)

Abstract | Tags: Papers | Links:

@inproceedings{Selim2026DisagreeWith,

title = {Do You (Dis)agree With Me? Modelling Implicit User Disagreement in Human–AI Interaction Using Gaze Data},

author = {Abdulrahman Mohamed Selim (German Research Center for Artificial Intelligence (DFKI)), Omair Shahzad Bhatti (German Research Center for Artificial Intelligence (DFKI)), Amr Gomaa (German Research Center for Artificial Intelligence (DFKI) and Saarland Informatics Campus), Michael Barz (German Research Center for Artificial Intelligence (DFKI)), Daniel Sonntag (German Research Center for Artificial Intelligence (DFKI) and University of Oldenburg)},

url = {https://iml.dfki.de/, website

https://www.linkedin.com/in/abdulrahmanselim, author's linkedin},

doi = {10.1145/3772318.3790594},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {The widespread use of generative AI has led to increased focus on human–AI interaction. However, AI systems can generate unexpected outputs, leading to disagreement or human–AI conflict. This paper focuses on modelling user disagreement using machine learning (ML) by observing users' implicit viewing behaviour. We conducted a controlled study with 30 participants evaluating captions from a simulated ML image-captioning system. Participants indicated agreement or disagreement with each caption while we recorded their gaze and facial-expression data, which we used to predict (dis)agreement. We show that unimodal gaze-based personalised modelling (0.684 average balanced accuracy) outperforms generalised modelling (0.570), whereas multimodal approaches did not improve performance. Our exploratory post hoc gaze-based analysis highlights the importance of feature selection and temporal dynamics, which help guide system design and future work. We release the dataset to support reproducibility and further work. Due to the nature of this research, we also discuss the potential ethical and privacy implications of continuous passive gaze and facial monitoring.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

Effects of Small Latency Variations in 2D Target Selection Tasks

Andreas Schmid (University of Regensburg), Isabell Röhr (University of Regensburg), Martina Emmert (University of Regensburg), Niels Henze (University of Tübingen), Raphael Wimmer (University of Regensburg)

Abstract | Tags: Papers | Links:

@inproceedings{Schmid2026EffectsSmall,

title = {Effects of Small Latency Variations in 2D Target Selection Tasks},

author = {Andreas Schmid (University of Regensburg), Isabell Röhr (University of Regensburg), Martina Emmert (University of Regensburg), Niels Henze (University of Tübingen), Raphael Wimmer (University of Regensburg)},

url = {https://www.uni-regensburg.de/informatik-data-science/fakultaet/einrichtungen/medieninformatik/, website},

doi = {10.1145/3772318.3791712},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Systems' latency — the time between user input and system response — slows down the human-computer interaction loop. Several studies revealed negative objective and subjective effects of high latency, typically treating latency as a constant delay. Because latency varies significantly in practice, recent work also assessed the effects of large and sudden latency changes. In practice, however, latency variations are small but frequent. As the effects of such variations are unclear, we investigate how small latency variations (+/-50 ms) affect users' performance and perceived task load for 2D target selection tasks with static and moving targets. For static targets, we found that latency variation causes significantly higher completion times and less efficient trajectories, however with small effect sizes. In contrast, we found no significant effects on any performance measure for moving targets. Our findings indicate that the effect of latency variation is generally very small and quickly disappears for non-trivial tasks.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

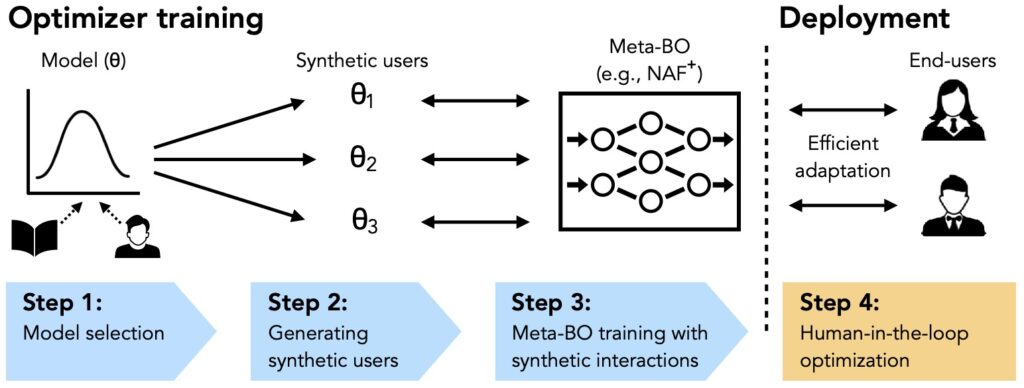

Efficient Human-in-the-Loop Optimization via Priors Learned from User Models

Yi-Chi Liao (Saarland University & ETH Zurich), João Belo (Saarland University), Hee-Seung Moon (Chung-Ang University), Jürgen Steimle (Saarland University), Anna Feit (Saarland University)

Abstract | Tags: Papers | Links:

@inproceedings{Liao2026EfficientHumanintheloop,

title = {Efficient Human-in-the-Loop Optimization via Priors Learned from User Models},

author = {Yi-Chi Liao (Saarland University & ETH Zurich), João Belo (Saarland University), Hee-Seung Moon (Chung-Ang University), Jürgen Steimle (Saarland University), Anna Feit (Saarland University)},

url = {https://cix.cs.uni-saarland.de/, website

https://hci.cs.uni-saarland.de/, website

https://www.linkedin.com/company/saarhcilab/, lab's linkedin},

doi = {10.1145/3772318.3791976},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},

abstract = {Human-in-the-loop optimization identifies optimal interface designs by iteratively observing user performance. However, it often requires numerous iterations due to the lack of prior information. While recent approaches have accelerated this process by leveraging previous optimization data, collecting user data remains costly and often impractical. We present a conceptual framework, Human-in-the-Loop Optimization with Model-Informed Priors (HOMI), which augments human-in-the-loop optimization with a training phase where the optimizer learns adaptation strategies from diverse, synthetic user data generated with predictive models before deployment. To realize HOMI, we introduce Neural Acquisition Function+ (NAF+), a Bayesian optimization method featuring a neural acquisition function trained with reinforcement learning. NAF+ learns optimization strategies from large-scale synthetic data, improving efficiency in real-time optimization with users. We evaluate HOMI and NAF+ with mid-air keyboard optimization, a representative VR input task. Our work presents a new approach for more efficient interface adaptation by bridging in situ and in silico optimization processes.},

keywords = {Papers},

pubstate = {published},

tppubtype = {inproceedings}

}

eHMI for All - Investigating the Effect of External Communication of Automated Vehicles on Pedestrians, Manual Drivers, and Cyclists in Virtual Reality

Mark Colley (UCL Interaction Centre), Simon Kopp (Institute of Media Informatics, Ulm University), Debargha Dey (Eindhoven University of Technology), Pascal Jansen (Institute of Media Informatics, Ulm University), Enrico Rukzio (Institute of Media Informatics, Ulm University)

Abstract | Tags: Papers | Links:

@inproceedings{Colley2026EhmiAll,

title = {eHMI for All - Investigating the Effect of External Communication of Automated Vehicles on Pedestrians, Manual Drivers, and Cyclists in Virtual Reality},

author = {Mark Colley (UCL Interaction Centre), Simon Kopp (Institute of Media Informatics, Ulm University), Debargha Dey (Eindhoven University of Technology), Pascal Jansen (Institute of Media Informatics, Ulm University), Enrico Rukzio (Institute of Media Informatics, Ulm University)},

doi = {10.1145/3772318.3790585},

year = {2026},

date = {2026-04-13},

urldate = {2026-04-13},